Google Quantum Breakthrough Slashes Hardware Needed to Break RSA Encryption

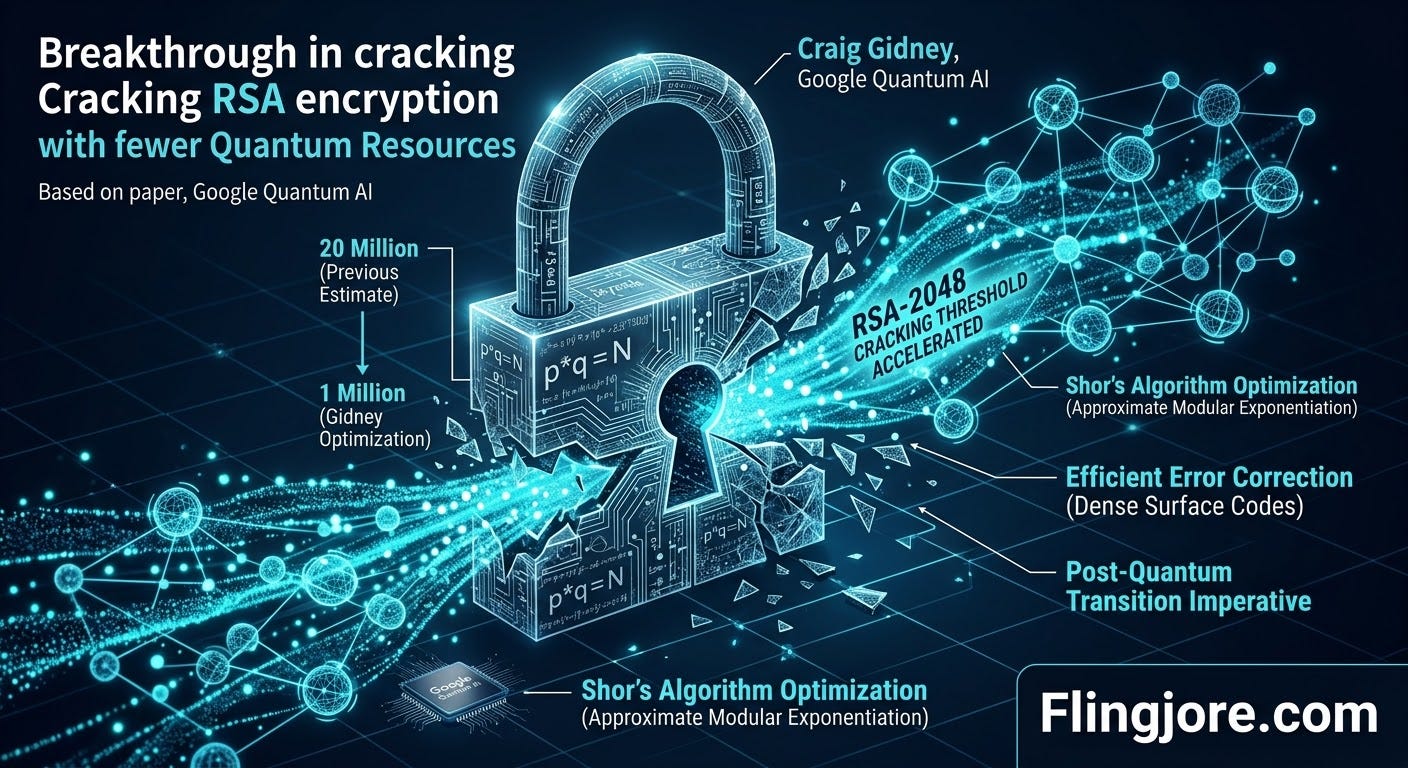

Researchers demonstrate how optimized algorithms reduce the threshold to crack internet encryption from 20 million physical qubits to fewer than one million.

The timeline for a quantum computing threat to global cybersecurity is rapidly compressing. A team of researchers at Google Quantum AI, led by Craig Gidney, outlines advances in quantum computer algorithms and error-correction methods that demonstrate how a quantum machine could crack Rivest-Shamir-Adleman (RSA) encryption keys with far fewer resources than previously estimated. The development suggests that the cryptographic foundation of the modern internet faces a credible threat much earlier than legacy projections indicate, prompting urgency among security experts to finalize and deploy next-generation, post-quantum encryption techniques.

The paper, published on the arXiv preprint server, details how an optimized quantum architecture reduces the hardware requirement for breaking a standard 2048-bit RSA key to fewer than one million physical qubits. Furthermore, the calculations show that a machine of this size can complete the decryption in just one week. This marks a staggering reduction from the 2019 consensus, which estimated that at least 20 million physical qubits would be required to achieve the same result in a reasonable timeframe.

The cascading effect of this research is already visible across the quantum computing field. Building directly upon Gidney’s implementation of approximate residue number system arithmetic and improved surface codes, independent research teams are now accelerating the timeline even further. According to recent reports, parallel breakthroughs utilizing Quantum Low-Density Parity-Check (qLDPC) codes push the theoretical threshold well below the one-million-qubit mark, intensifying the global race toward cryptographic agility.

Understanding the Target: The Mechanics of RSA Encryption

To grasp the magnitude of the Google Quantum AI findings, one must first examine the architecture of the encryption standard under threat. Developed in the late 1970s by Ron Rivest, Adi Shamir and Leonard Adleman, RSA encryption remains one of the most widely deployed public-key cryptosystems in the world. It secures everything from online banking transactions and digital certificates to encrypted messaging platforms and secure email protocols.

RSA relies on asymmetric cryptography. It involves generating a pair of mathematically linked keys: a public key, which is openly shared and used to encrypt data, and a private key, which is kept secret and used to decrypt the data. The security of this system fundamentally relies on the mathematical difficulty of integer factorization.

When a user generates an RSA key pair, the algorithm multiplies two massive prime numbers together to create an even larger composite number, known as the modulus. This modulus forms the basis of the public key. While it is computationally trivial for a standard classical computer to multiply two large prime numbers together, the reverse operation — taking the massive composite number and figuring out which two primes were multiplied to create it — is computationally infeasible for classical machines.
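The key-generation step described above can be sketched in a few lines. This is a toy illustration only: the primes 61 and 53, the public exponent 17 and the function name `toy_rsa_keypair` are assumptions chosen for readability, and real deployments use primes hundreds of digits long plus padding schemes that are omitted here.

```python
from math import gcd

def toy_rsa_keypair(p: int, q: int, e: int = 17):
    """Build a toy RSA key pair from two (small) primes p and q."""
    n = p * q                      # the public modulus
    phi = (p - 1) * (q - 1)        # Euler's totient of n; needs p and q
    if gcd(e, phi) != 1:
        raise ValueError("e must be coprime to (p - 1) * (q - 1)")
    d = pow(e, -1, phi)            # private exponent: inverse of e modulo phi
    return (n, e), (n, d)

public_key, private_key = toy_rsa_keypair(61, 53)
n, e = public_key
_, d = private_key

message = 65
ciphertext = pow(message, e, n)    # anyone can encrypt with the public key
recovered = pow(ciphertext, d, n)  # only the private-key holder can decrypt
print(n, ciphertext, recovered)
```

Computing `d` requires `phi`, which in turn requires knowing `p` and `q`. That dependency is why recovering the primes from the modulus is equivalent to recovering the private key.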

Current industry guidance generally calls for RSA keys of at least 2048 bits. For a classical supercomputer utilizing the most efficient known classical factoring algorithm (the General Number Field Sieve), finding the prime factors of a 2048-bit number would take millions of years. This intractable mathematical asymmetry is the bedrock of modern digital trust.

However, quantum computers do not operate on classical logic. While classical computers process information in binary bits (representing strictly a 0 or a 1), quantum computers utilize quantum bits, or qubits. Qubits leverage the principles of quantum mechanics — specifically superposition and entanglement — to represent multiple states simultaneously and perform complex multidimensional calculations.

In 1994, mathematician Peter Shor published what is now known as Shor’s algorithm, a quantum algorithm explicitly designed to find the prime factors of large integers exponentially faster than the best known classical algorithms. If a sufficiently large and stable quantum computer executes Shor’s algorithm, the mathematical fortress of RSA crumbles, turning a million-year classical computing problem into a task that takes mere days or hours.
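Shor’s algorithm works by reducing factoring to a different problem: finding the order of a number modulo N, meaning the smallest exponent r for which a^r leaves remainder 1. The sketch below shows that classical reduction on a toy modulus. The order is found here by brute force, which is the one step a quantum computer would accelerate; the function names are illustrative, not taken from any paper.

```python
from math import gcd

def order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.
    Requires gcd(a, n) == 1. This is the step a quantum computer speeds up."""
    r, x = 1, a % n
    while x != 1:
        x = (x * a) % n
        r += 1
    return r

def factor_via_order(n: int, a: int):
    """Try to split n using the order of a modulo n; None if a is unlucky."""
    g = gcd(a, n)
    if g != 1:
        return g, n // g           # lucky guess: a already shares a factor
    r = order(a, n)
    if r % 2 == 1:
        return None                # odd order: retry with another a
    y = pow(a, r // 2, n)          # a square root of 1 modulo n
    if y == n - 1:
        return None                # trivial square root: retry
    p = gcd(y - 1, n)              # nontrivial factor of n
    return p, n // p

print(factor_via_order(15, 7))
```

For a 2048-bit modulus the brute-force loop in `order` would never finish, which is exactly the gap the quantum period-finding routine is designed to close.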

The Evolution of Qubit Requirements: From 20 Million to One Million

For decades, the threat of Shor’s algorithm remained purely theoretical. Quantum hardware development is notoriously difficult. Qubits are highly susceptible to environmental noise, temperature fluctuations and electromagnetic interference. This instability leads to a phenomenon known as decoherence, in which the fragile quantum state degrades, resulting in calculation errors.

To run a complex algorithm like Shor’s on a 2048-bit integer, a quantum computer requires “logical qubits”: highly stable, error-corrected qubits. Because physical qubits are prone to errors, quantum engineers group hundreds or thousands of physical qubits together to create a single, reliable logical qubit. This massive overhead is why researchers historically viewed the quantum threat as a distant, futuristic concern.
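A rough feel for that overhead comes from a commonly quoted surface-code rule of thumb, in which the logical error rate falls exponentially as the code distance d grows. The sketch below assumes that heuristic, with a 1% threshold and a prefactor of 0.1, and counts 2d² − 1 physical qubits per logical qubit for a rotated surface-code patch. All of these constants, along with the 5e-13 target, are illustrative assumptions rather than figures from the Google paper.

```python
def logical_error_rate(p_phys, distance, p_threshold=1e-2, prefactor=0.1):
    """Heuristic (assumed) scaling: errors fall exponentially with code distance."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)

def min_distance(p_phys, target, p_threshold=1e-2):
    """Smallest odd code distance whose heuristic logical error rate meets target."""
    if p_phys >= p_threshold:
        raise ValueError("above threshold: adding qubits does not help")
    d = 3
    while logical_error_rate(p_phys, d, p_threshold) > target:
        d += 2                     # surface-code distances are conventionally odd
    return d

def physical_per_logical(distance):
    """Rotated surface-code patch: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * distance * distance - 1

# Hypothetical inputs: one error per thousand operations, very low target rate.
distance = min_distance(p_phys=1e-3, target=5e-13)
print(distance, physical_per_logical(distance))
```

Under these assumptions a single logical qubit costs on the order of a thousand physical qubits, which is the kind of ratio that denser error-correction schemes aim to shrink.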

In 2019, Craig Gidney and his collaborator Martin Ekerå published a landmark paper estimating that breaking RSA-2048 would require 20 million physical qubits running for eight hours. At the time, the largest quantum computers possessed fewer than 100 physical qubits. The 20-million-qubit figure gave the cybersecurity industry a false sense of security, implying a decades-long runway before a Cryptographically Relevant Quantum Computer (CRQC) would materialize.

The latest research from Google Quantum AI upends that timeline. Gidney’s new paper shows that, through highly optimized algorithms and novel error-correction architectures, the resource requirement falls below one million physical qubits.

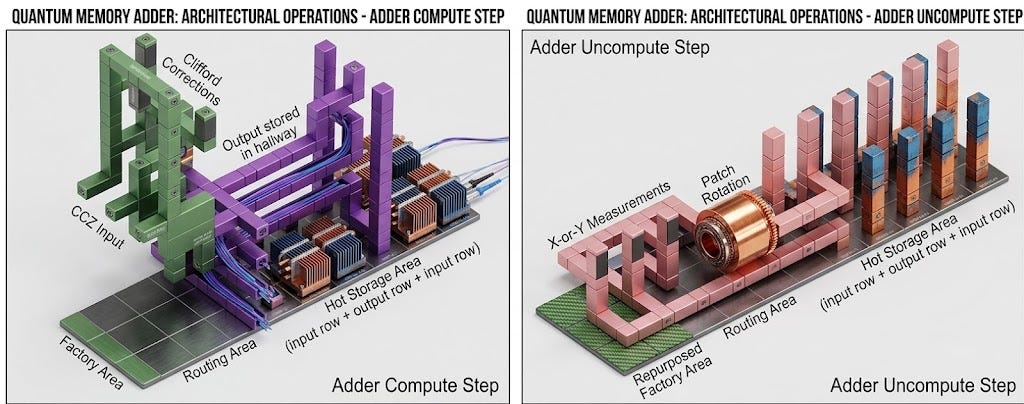

The team achieves this by developing more efficient algorithms that build on approximate modular exponentiation. In traditional computing, arithmetic operations must be perfectly precise. Gidney’s approach utilizes approximate residue number systems, streamlining the mathematical operations required within Shor’s algorithm and drastically reducing the number of logical operations the computer must perform.
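The idea behind a residue number system can be shown with ordinary integers: a large number is stored as its remainders modulo several small, pairwise coprime moduli, arithmetic is done independently on each small remainder, and the full result is rebuilt with the Chinese Remainder Theorem. The sketch below is an exact, classical illustration with arbitrarily chosen moduli. It is not Gidney’s approximate quantum variant, which deliberately tolerates small errors to save work.

```python
from math import prod

# Pairwise coprime moduli, chosen only for illustration.
MODULI = (251, 253, 255, 256)

def to_rns(x: int):
    """Represent x by its remainders modulo each small modulus."""
    return tuple(x % m for m in MODULI)

def rns_mul(a, b):
    """Multiply two RNS values: each small residue is handled independently."""
    return tuple((x * y) % m for x, y, m in zip(a, b, MODULI))

def from_rns(residues) -> int:
    """Rebuild the integer with the Chinese Remainder Theorem."""
    big_m = prod(MODULI)
    total = 0
    for r, m in zip(residues, MODULI):
        partial = big_m // m
        total += r * partial * pow(partial, -1, m)
    return total % big_m

a, b = 12345, 54321
product = from_rns(rns_mul(to_rns(a), to_rns(b)))
print(product == a * b)
```

The result is only exact while the true product stays below the product of the moduli, so the choice of moduli sets the usable range.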

Simultaneously, the Google team implements denser error-correction models. They utilize “yoked” surface codes, which improve the idle storage of qubits. This advancement reduces the ratio of physical qubits needed to support a single logical qubit, allowing the system to do far more with a smaller hardware footprint.

While a one-million-qubit machine does not yet exist — current leading machines, such as those produced by IBM, Google and neutral-atom startups, hover in the hundreds or low thousands of physical qubits — the reduction in required resources alters the strategic landscape. Gidney notes in the paper that while immense engineering challenges remain, the pace of optimization indicates that security experts no longer have the luxury of time.

The Ripple Effect: Further Hardware Reductions

The Google Quantum AI breakthrough acts as a catalyst for the broader quantum research community, triggering a cascade of secondary discoveries that further lower the hardware threshold. The momentum shifts the scientific focus from “when will we build 20 million qubits?” to “how efficiently can we arrange 100,000 qubits?”

A preprint study published in February 2026 by researchers at Iceberg Quantum in Sydney builds directly upon Gidney’s May 2025 algorithm implementation. The Sydney team introduces the “Pinnacle Architecture,” arguing that by abandoning traditional surface codes in favor of Quantum Low-Density Parity-Check (qLDPC) codes, the hardware requirement to break RSA-2048 drops to roughly 100,000 physical qubits.

In past architectures, physical qubits can only interact with their immediate, adjacent neighbors on a two-dimensional grid. The qLDPC code framework allows for non-local interactions, meaning qubits can interact with other qubits located further away across the processor array. This vastly increases connectivity and information density.
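The difference between neighbor-only and non-local checks can be pictured with a classical analogy. In the sketch below, each parity check watches a handful of bits and reports whether an odd number of them have flipped. Both sets of checks are sparse, but only one of them couples far-apart positions. The specific checks are hypothetical and do not correspond to any real qLDPC code.

```python
def syndrome(checks, error_bits):
    """Parity of the flipped bits seen by each check (0 means satisfied)."""
    return [sum(error_bits[i] for i in check) % 2 for check in checks]

def max_span(checks):
    """Largest index gap inside any single check: a crude locality measure."""
    return max(max(check) - min(check) for check in checks)

# Nearest-neighbor checks on a line of 8 bits (surface-code-like locality).
local_checks = [(i, i + 1) for i in range(7)]
# Sparse checks that also couple far-apart bits (LDPC-like, hypothetical).
nonlocal_checks = [(0, 3, 6), (1, 4, 7), (2, 5, 0), (3, 6, 1), (4, 7, 2)]

error = [0, 0, 0, 1, 0, 0, 0, 0]   # a single flipped bit at position 3

print(syndrome(local_checks, error), max_span(local_checks))
print(syndrome(nonlocal_checks, error), max_span(nonlocal_checks))
```

In hardware terms, a large span means a measurement has to involve qubits that are not physically adjacent, which is the wiring problem that makes such codes harder to build.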

The Iceberg Quantum paper calculates that with a 98,000-qubit superconducting system, a 2048-bit RSA key falls in about one month of continuous runtime. Breaking it in a single day requires 471,000 physical qubits.
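One way to compare these estimates is to multiply qubit count by runtime, giving a rough “qubit-hours” figure for each attack. The short calculation below uses only the numbers quoted in this article, treating “fewer than one million” and “one week” as upper bounds and assuming a 30-day month. It is back-of-the-envelope arithmetic, not a metric taken from any of the papers.

```python
# (physical qubits, runtime in hours), as quoted in the article.
ESTIMATES = {
    "Gidney and Ekera, 2019": (20_000_000, 8),
    "Gidney, 2025 (upper bound)": (1_000_000, 7 * 24),
    "Iceberg Quantum, about one month": (98_000, 30 * 24),  # 30-day month assumed
    "Iceberg Quantum, one day": (471_000, 24),
}

qubit_hours = {name: qubits * hours for name, (qubits, hours) in ESTIMATES.items()}

for name, value in qubit_hours.items():
    print(f"{name}: {value:,} qubit-hours")
```

Seen this way, part of each headline reduction comes from accepting a longer runtime, which is why qubit count and runtime are best read together.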

Simultaneously, a team of quantum physicists at the California Institute of Technology leverages neutral atom technology, publishing a design capable of breaking encryption with tens of thousands of qubits over a longer timeframe.

University of Texas computer scientist Scott Aaronson, a prominent voice in quantum complexity theory, evaluates these plunging estimates on his Shtetl-Optimized blog. Aaronson confirms the theoretical soundness of the resource reduction, though he tempers expectations regarding the immediate engineering realities.

“Yes, this is serious work. The claim seems entirely plausible to me,” Aaronson writes. “I have no idea by how much this shortens the timeline for breaking RSA-2048 on a quantum computer... I, for one, had already ‘baked in’ the assumption that further improvements were surely possible by using better error-correcting codes. But it’s good to figure it out explicitly.”

Aaronson also notes the immense difficulty of engineering qLDPC codes in superconducting systems compared to traditional surface codes, pointing out that “you need wildly nonlocal measurements of the error syndromes.” Despite the engineering hurdles, the resource estimates all point the same way: the barrier to breaking encryption keeps falling.

The Good: Constructive Applications of the Technology

While the headline-grabbing aspect of Gidney’s research focuses on the destruction of RSA encryption, the underlying algorithmic and error-correction breakthroughs offer profound benefits for human progress. A quantum computer capable of efficiently running Shor’s algorithm is also capable of running highly complex simulations that remain entirely inaccessible to classical supercomputers.

The optimizations developed by Google Quantum AI, alongside the qLDPC code architectures, directly translate to the simulation of quantum materials and quantum chemistry. Classical computers struggle to simulate molecules because the number of electron interactions grows exponentially with every atom added to the simulation. A quantum computer, operating naturally according to quantum mechanics, bypasses this bottleneck.
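A simple way to see the classical bottleneck is to count the memory a brute-force simulation needs. The sketch below assumes the most direct approach, storing one complex amplitude of 16 bytes for every basis state of an n-qubit system. Smarter classical methods exist for special cases, so this is an illustration of the exponential trend rather than a hard limit.

```python
def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store all 2**n complex amplitudes of an n-qubit state."""
    return bytes_per_amplitude * 2 ** n_qubits

GIB = 1024 ** 3

for n in (10, 30, 50):
    print(n, statevector_bytes(n) / GIB, "GiB")
```

Each added qubit doubles the requirement, so a system that fits on a laptop at 30 qubits is far beyond any existing machine’s memory at 50.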

Lawrence Cohen of Iceberg Quantum emphasizes this dual-use nature in an interview with Veritas News. He points out that RSA factoring acts as a universal benchmark for processing power, but the exact same architecture drives unprecedented scientific discovery.

“He says that breaking RSA encryption is a well-studied problem and therefore a great benchmark for anyone looking to build a powerful quantum computer, but his team’s approach could also be used to run better and more useful simulations of quantum materials and quantum chemistry,” the Veritas report notes.

Specifically, these highly efficient, million-qubit (or smaller) architectures excel at calculating the Fermi-Hubbard model. This model is a theoretical framework in condensed-matter physics used to describe the behavior of electrons in a lattice structure. Successfully simulating the Fermi-Hubbard model is considered the holy grail for understanding high-temperature superconductivity.

If researchers use these advanced algorithms to discover materials that conduct electricity without resistance at room temperature, it revolutionizes the global energy grid, creates frictionless transportation systems and slashes global power consumption. Furthermore, the ability to perfectly simulate complex molecules accelerates drug discovery, allowing pharmaceutical companies to design targeted therapies for diseases at the atomic level in mere hours, bypassing years of physical laboratory trials. The agricultural sector benefits as well, as quantum simulations can unlock new, energy-efficient methods for fixing nitrogen to create fertilizers, drastically reducing the carbon footprint of global food production.

The Bad: Cybersecurity, Malicious Actors and ‘Harvest Now, Decrypt Later’

Conversely, the exact same hardware scaling reductions present an immediate, asymmetric threat to global security. The acceleration of the quantum timeline validates the deepest fears of intelligence agencies, financial institutions and cybersecurity professionals.

The primary danger does not wait for a fully functional, million-qubit machine to come online. The threat actively unfolds today through a strategy known as “Harvest Now, Decrypt Later” (HNDL) or “Store Now, Decrypt Later” (SNDL).

Nation-state adversaries, state-sponsored hacking syndicates and sophisticated cybercriminals are actively intercepting and stockpiling massive volumes of encrypted internet traffic. Because storage is cheap, these entities scrape heavily encrypted data — ranging from classified military communications and diplomatic cables to proprietary corporate secrets and biometric health data. Today, this data remains an unreadable ciphertext. However, the perpetrators hoard it with the explicit knowledge that the Google Quantum AI breakthroughs make a future decryption machine inevitable.

When the hardware eventually catches up to Craig Gidney’s theoretical algorithms, these adversaries retroactively decrypt the stockpiled data. For information with a long shelf life — such as intelligence operative identities, nuclear weapon designs, or citizens’ genetic data — a decryption event occurring a decade from now remains catastrophic.

Nadia Heninger, a prominent cryptographer, argues strongly for the immediate and open publication of algorithmic breakthroughs like Gidney’s, precisely because it forces the world to confront the reality of the threat. On his blog, Aaronson recalls Heninger’s stance: “Nadia’s arguments updated me in the direction of saying that groups with further improvements to the resource requirements for Shor’s algorithm should probably just go ahead and disclose what they’ve learned... balanced against the loud, clear, open warning the world will get to migrate faster to quantum.”

Beyond retrospective decryption, a real-time CRQC fundamentally undermines the digital economy. If an adversary wields a one-million-qubit machine capable of breaking RSA-2048 in a week, they can seamlessly forge digital signatures. This allows hackers to impersonate software update servers, pushing malicious code disguised as legitimate operating system updates to millions of devices simultaneously without triggering security alarms. It allows the complete bypass of Secure Sockets Layer and Transport Layer Security (SSL/TLS) protections, turning safe online banking portals into transparent funnels for financial theft.

The Cryptocurrency Vulnerability

While RSA governs much of traditional digital infrastructure, the acceleration of quantum algorithms poses a particularly acute threat to the cryptocurrency ecosystem, including Bitcoin and Ethereum.

Cryptocurrencies do not typically rely on RSA. Instead, they utilize a different cryptographic standard known as Elliptic Curve Cryptography (ECC), specifically the secp256k1 curve. ECC provides stronger security than RSA for a given key length. For classical computers, a 256-bit ECC key offers comparable security to a 3072-bit RSA key. This efficiency makes ECC ideal for blockchains, where minimizing transaction data size is critical.

However, against a quantum computer running Shor’s algorithm, ECC is actually structurally weaker than RSA. Because elliptic-curve keys are so much shorter, Shor’s algorithm can solve the underlying discrete logarithm problem with far fewer logical qubits than it needs to factor an RSA modulus of comparable classical strength.

A whitepaper published by Google Quantum AI in late March 2026 explicitly outlines this vulnerability. The report states: “First, in pursuit of efficiency and scale many blockchains depend crucially on ECDLP-based cryptography which uses keys that are almost an order of magnitude smaller than those of RSA at a similar security level which means a smaller CRQC is required to break them.”

The researchers calculate that breaking a cryptocurrency wallet’s elliptic curve protections requires significantly fewer resources than breaking RSA-2048. If an attacker derives the private key from the public key stored on the blockchain ledger, they gain full custodial control over the victim’s digital assets. Given that the blockchain is immutable, fraudulent quantum-initiated transactions cannot be reversed. A rapid, unannounced breakthrough in quantum hardware scaling thus threatens to instantly drain billions of dollars of wealth from decentralized finance protocols before the networks have time to execute complicated hard forks to upgrade their cryptographic foundations.
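The relationship between a wallet’s private and public key can be sketched directly on the secp256k1 curve. The code below uses the curve’s published parameters and textbook point arithmetic to derive a public key from a made-up private key. It is a minimal, unoptimized illustration that should not be used to handle real funds, and the private key value is purely hypothetical.

```python
# secp256k1 domain parameters: y^2 = x^3 + 7 over the prime field of size P.
P = 2**256 - 2**32 - 977
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
G = (0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
     0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8)

def on_curve(point) -> bool:
    x, y = point
    return (y * y - x * x * x - 7) % P == 0

def point_add(a, b):
    """Add two curve points; None stands for the point at infinity."""
    if a is None:
        return b
    if b is None:
        return a
    (x1, y1), (x2, y2) = a, b
    if x1 == x2 and (y1 + y2) % P == 0:
        return None                                   # a + (-a) = infinity
    if a == b:
        slope = 3 * x1 * x1 * pow(2 * y1 % P, -1, P) % P   # tangent line
    else:
        slope = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P  # chord line
    x3 = (slope * slope - x1 - x2) % P
    y3 = (slope * (x1 - x3) - y1) % P
    return x3, y3

def scalar_mult(k: int, point=G):
    """Double-and-add: computes k * point in about 256 steps."""
    result, addend = None, point
    while k:
        if k & 1:
            result = point_add(result, addend)
        addend = point_add(addend, addend)
        k >>= 1
    return result

private_key = 123456789            # hypothetical secret, far too small in practice
public_key = scalar_mult(private_key)
print(on_curve(public_key))
```

Going from `private_key` to `public_key` takes a few hundred point operations. Going the other way is the elliptic curve discrete logarithm problem, which is infeasible classically and is the step Shor’s algorithm targets.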

The Skeptic’s View: Engineering Realities and Error Rates

Responsible reporting requires acknowledging the massive gulf between theoretical algorithmic reductions and physical hardware realities. While the math surrounding Gidney’s million-qubit model is sound, the physical execution remains a monumental engineering challenge.

Skeptics and hardware engineers emphasize that qubit quantity is only one half of the equation; qubit quality, defined by the error rate, is the ultimate bottleneck.

A commentator on a cybersecurity discussion board regarding the qLDPC breakthrough captures the engineering skepticism: “To be sure, the result remains conditional on several factors, including achieving sustained physical error rates at or below about one error in a thousand... Well, OK, but the biggest issue is that we can’t even approach that error rate. So yeah, it is interesting that we could use a lot less bits if we have some sort of noise breakthrough but this doesn’t seem to have any implications at the cybersecurity level.”

Currently, state-of-the-art superconducting qubits struggle to maintain stable error rates below the thresholds demanded by the algorithms. The physical machines must operate at temperatures near absolute zero, encased in massive dilution refrigerators. Scaling from a 1,000-qubit processor to a 100,000-qubit processor requires exponentially more complex wiring, control electronics and cooling power. Cross-talk — where manipulating one qubit accidentally alters the state of a neighboring qubit — plagues dense architectures.

Aaronson echoes this sentiment regarding the physical properties of the proposed systems: “I still wonder how we’ll reach 99.9% or 99.99% [fidelities] at scale for any qubit modality. The first IBM experiments are encouraging, but not reaching that level.”

Therefore, treating the Google Quantum AI paper as evidence that encryption fails tomorrow is factually inaccurate. However, ignoring the accelerating pace of algorithmic optimization because of current hardware limitations is a dangerous operational blind spot for security administrators.

Analytical Frameworks for the Cryptographic Transition

To fully understand the implications of this rapidly shifting landscape, security professionals and policymakers must view the developments through distinct analytical frameworks that isolate the underlying themes.

Framework 1: The Economics of Quantum Cryptanalysis

The reduction from 20 million qubits to one million qubits fundamentally alters the economics of cyber warfare. At 20 million qubits, constructing a CRQC requires a massive, multi-billion-dollar national effort comparable to the Manhattan Project. It restricts the capability strictly to apex superpowers. However, as algorithmic efficiency pushes the requirement down to 100,000 or fewer physical qubits, the financial and logistical barriers drop. The threat actor profile broadens from apex nation-states to middle-tier state actors, corporate espionage syndicates and eventually well-funded cybercriminal organizations operating highly sophisticated ransomware operations. The technological democratization of decryption power is the true systemic risk.

Framework 2: The Fallacy of Symmetrical Scaling

Historically, classical encryption has relied on a simple scaling defense: as classical computers get faster, security professionals increase the key size. Migrating from 1024-bit RSA to 2048-bit RSA buys decades of security against classical threats at very little computational cost to the defender. Against a quantum adversary running an optimized Shor’s algorithm, this scaling defense collapses. Doubling the RSA key size to 4096 bits raises the required number of logical qubits only roughly in proportion to the key length, a polynomial rather than exponential increase, offering virtually zero long-term protection against a maturing quantum computer. The Google Quantum AI research underscores that defenders cannot outrun the threat simply by making traditional keys longer; a complete paradigm shift in the mathematics of the encryption itself is required.
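The asymmetry can be made concrete with two rough formulas. The sketch below uses the standard heuristic running-time expression for the General Number Field Sieve, ignoring its lower-order term, to estimate classical attack cost in bits of work. It sets that against a textbook Shor circuit layout that needs about 2n + 3 logical qubits for an n-bit modulus. Both are simplified assumptions for illustration; optimized constructions such as Gidney’s use different, smaller qubit counts.

```python
from math import log

def gnfs_work_bits(modulus_bits: int) -> float:
    """Approximate log2 of classical GNFS work (heuristic, o(1) term ignored)."""
    ln_n = modulus_bits * log(2)
    exponent = (64 / 9) ** (1 / 3) * ln_n ** (1 / 3) * log(ln_n) ** (2 / 3)
    return exponent / log(2)

def textbook_shor_logical_qubits(modulus_bits: int) -> int:
    """Logical qubits in one textbook circuit layout (assumed: 2n + 3)."""
    return 2 * modulus_bits + 3

for bits in (1024, 2048, 4096):
    print(bits, round(gnfs_work_bits(bits)), textbook_shor_logical_qubits(bits))
```

Each doubling of the key length multiplies the classical work by a factor of roughly a billion or more, while the quantum machine only needs to be about twice as large.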

Framework 3: The Threat Vector of Network Inertia

The most severe vulnerability is not the quantum computer itself, but the institutional inertia surrounding software upgrades. Migrating global digital infrastructure to entirely new cryptographic standards is a logistical nightmare. It requires discovering every instance of RSA and ECC hidden in legacy codebases, updating physical hardware appliances, coordinating interoperability between different enterprise networks and ensuring that older devices do not completely break upon upgrade. The timeline for a full global migration to new encryption standards takes an estimated ten to fifteen years. If algorithmic breakthroughs like those driven by Craig Gidney cut the hardware development timeline down to five to seven years, a massive temporal vulnerability window opens where the weapons exist, but the shields are not yet fully deployed.
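This timing argument is often expressed as Mosca’s inequality: data is at risk whenever the time it must stay secret plus the time needed to migrate exceeds the time until a CRQC exists. The sketch below plugs in the ranges quoted above. The ten-year shelf life in the last example is a hypothetical value chosen for illustration.

```python
def exposure_years(shelf_life: float, migration: float, years_to_crqc: float) -> float:
    """Years during which still-sensitive data is readable by a quantum attacker.
    Zero means the migration finishes in time (Mosca's inequality not violated)."""
    return max(0, shelf_life + migration - years_to_crqc)

# Migration of 10 to 15 years against a CRQC arriving in 5 to 7 years.
best_case = exposure_years(shelf_life=0, migration=10, years_to_crqc=7)
worst_case = exposure_years(shelf_life=0, migration=15, years_to_crqc=5)

# Hypothetical: records that must stay confidential for a further decade.
long_lived = exposure_years(shelf_life=10, migration=15, years_to_crqc=5)

print(best_case, worst_case, long_lived)
```

Even with no confidentiality requirement at all, the article’s figures leave a gap of several years, and long-lived data widens it further.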

The Road to Post-Quantum Cryptography

The undeniable consensus drawn from the Google Quantum AI research, regardless of exact timeline disputes, is that the current cryptographic era is drawing to a close. Bill Buchanan, a prominent cryptography professor, notes the inevitability of the shift in a recent industry analysis.

“No matter what way the building of quantum computers goes, the risk is there and we must start to migrate towards PQC [Post-Quantum Cryptography] methods,” Buchanan states. “While RSA has existed for nearly five decades, its dominance is coming to an end, along with the end of ECC.”

In response to these advancing threats, global standardization bodies, primarily the United States National Institute of Standards and Technology (NIST), have been formalizing Post-Quantum Cryptography (PQC) standards; NIST published its first finalized standards in August 2024. These new algorithms, such as ML-KEM (Module-Lattice-Based Key-Encapsulation Mechanism, FIPS 203) for secure key exchange and ML-DSA (Module-Lattice-Based Digital Signature Algorithm, FIPS 204) for digital signatures, rely on completely different branches of mathematics.

Unlike RSA, which relies on prime factorization, PQC algorithms rely on concepts like lattice-based cryptography and hash-based signatures. Currently, no known quantum algorithm, Shor’s included, can efficiently solve these mathematical puzzles, so they are believed to be secure against both classical supercomputers and future million-qubit quantum machines.

The immediate directive for technology companies, financial institutions and government agencies is to adopt a posture of “cryptographic agility.” This involves conducting exhaustive cryptographic inventories, identifying exactly where vulnerable RSA and ECC protocols are utilized within their networks and preparing software architectures to seamlessly hot-swap vulnerable algorithms for NIST-approved PQC standards as they become available in the products and protocols those networks depend on.
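In its simplest form, a cryptographic inventory is a list of where each algorithm is used, checked against a list of algorithms known to be quantum-vulnerable. The sketch below is a deliberately small illustration: the system names, the `CryptoUse` record and the replacement mapping are all hypothetical, and a real inventory would be built from network scans, certificate stores and source-code analysis.

```python
from dataclasses import dataclass

# Public-key algorithms whose security rests on factoring or discrete logarithms.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DH", "DSA"}
# Suggested replacements by purpose, following the NIST algorithms named above.
REPLACEMENT_BY_USE = {"key-exchange": "ML-KEM", "signature": "ML-DSA"}

@dataclass
class CryptoUse:
    system: str      # hypothetical system name
    algorithm: str   # algorithm currently deployed
    use: str         # what the algorithm is used for

def migration_plan(inventory):
    """List (system, current algorithm, suggested replacement) for vulnerable uses."""
    plan = []
    for item in inventory:
        if item.algorithm.upper() in QUANTUM_VULNERABLE:
            target = REPLACEMENT_BY_USE.get(item.use, "review manually")
            plan.append((item.system, item.algorithm, target))
    return plan

inventory = [
    CryptoUse("vpn-gateway", "RSA", "key-exchange"),
    CryptoUse("code-signing", "ECDSA", "signature"),
    CryptoUse("backup-archive", "AES-256", "bulk-encryption"),
]

for row in migration_plan(inventory):
    print(row)
```

Keeping the algorithm choice in data rather than hard-coded in each system is the practical meaning of agility: the replacement can then be changed in one place.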

Craig Gidney’s research at Google Quantum AI serves as a definitive turning point. It demonstrates that the timeline to a cryptographically relevant quantum computer is not static; it is actively shrinking from both ends. Hardware engineers are steadily increasing qubit counts and stability, while quantum algorithm developers are aggressively reducing the amount of hardware required to execute the fatal blow to classical encryption. As the resource requirements plummet from the tens of millions into the hundreds of thousands, the theoretical debate concludes, giving way to an urgent, pragmatic race to secure the digital future before the first million-qubit machine boots up.